ShanghaiTech-Kujiale Indoor 360° dataset

ShanghaiTech University, Kujiale.com

[Download(DropBox)]

Introduction

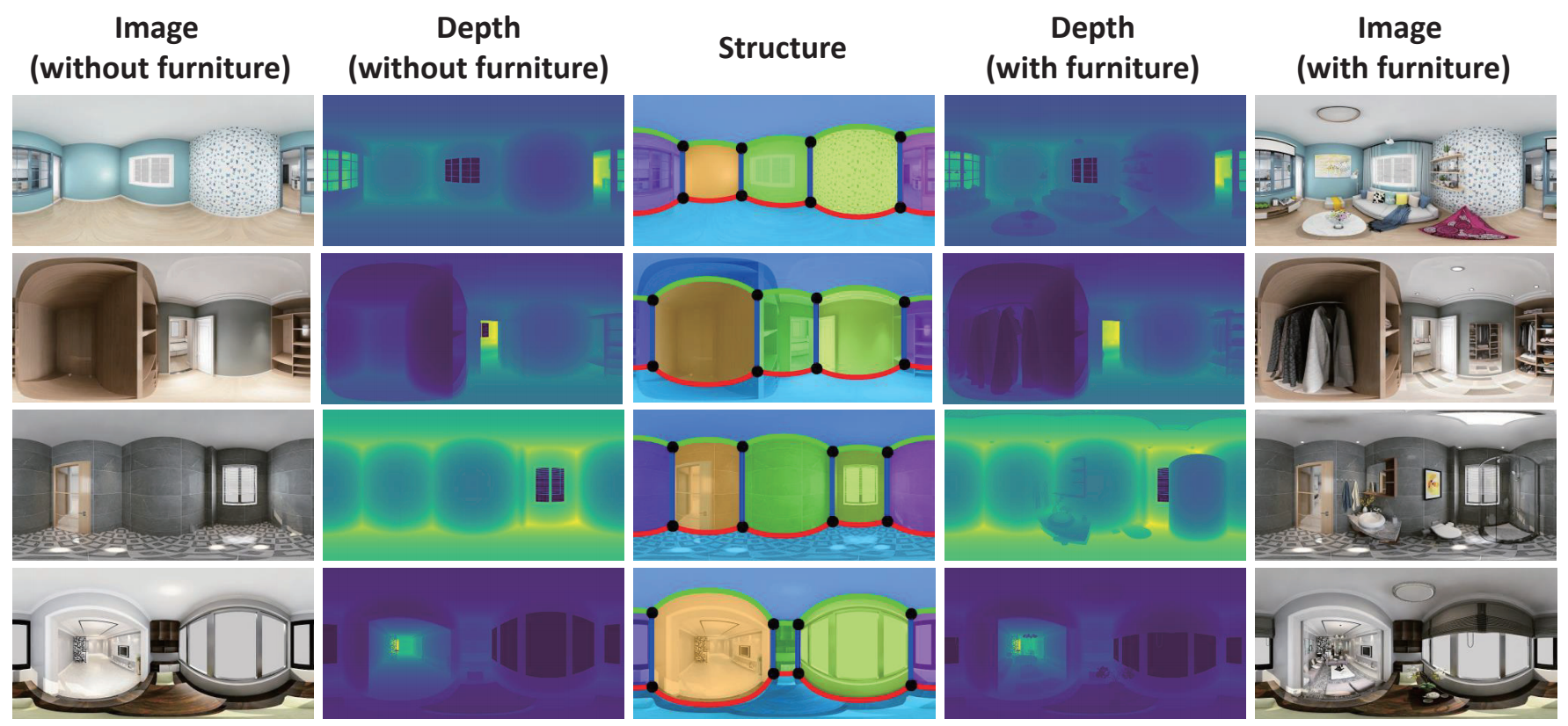

To facilitate performance evaluation on spherical indoor images, we also build a synthetic dataset. It contains 1775 rooms and 3550 images: each room is rendered twice, once with furniture and once without. The dataset provides RGB omnidirectional images together with their corresponding depth maps, corners, plane-plane intersection lines, and planes (room layout). We use a rendering technique similar to that of Structured3D and provide with/without-furniture pairs. Besides depth estimation, the dataset can also be used for layout estimation and for synthesizing an empty room from a furnished one.

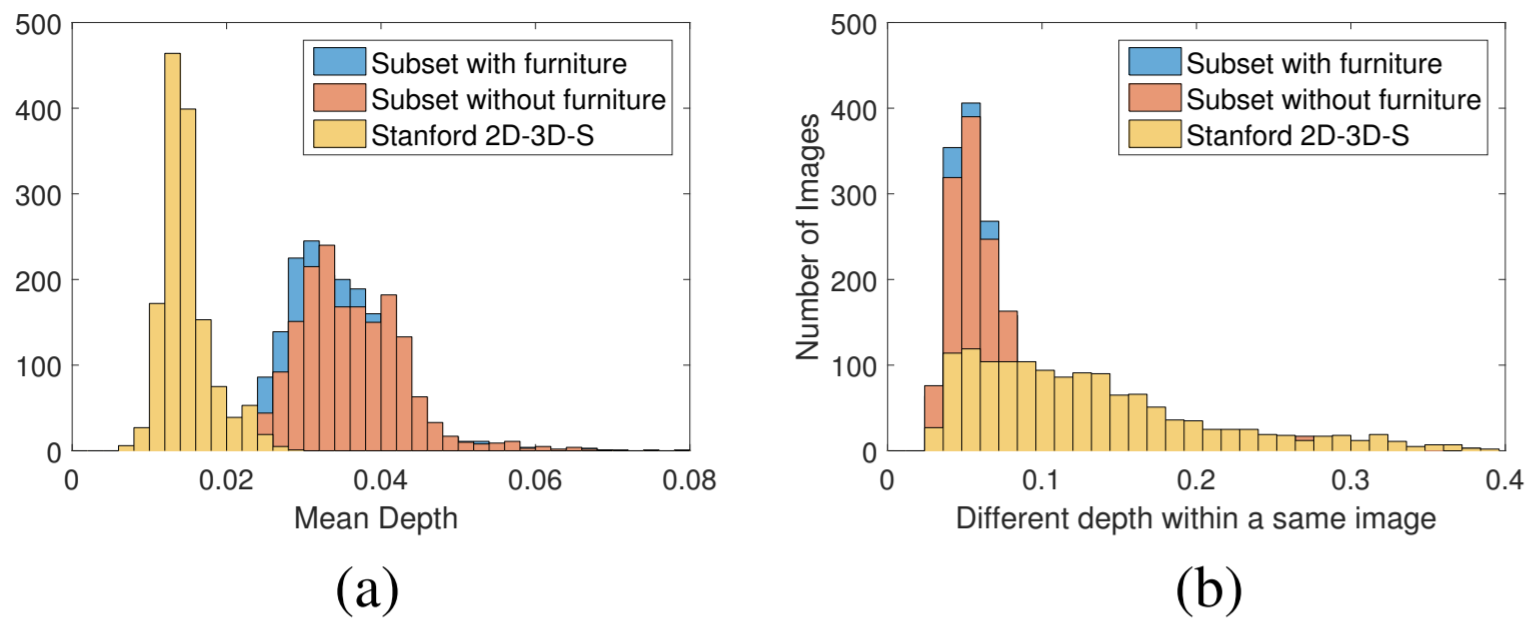

Details of the ShanghaiTech-Kujiale Indoor 360° dataset are shown above. (a) and (b) compare our synthetic dataset with the Stanford 2D-3D-S dataset in terms of the distribution of depth distances and of the depth difference within the same image.

Dataset Format

Train/test splits are provided in train.txt/test.txt. Each line corresponds to one idx. There are a total of 5 folders, where:

Depth maps are stored as 16-bit unsigned integer (uint16) single-channel images. During training and testing, we divide the depth values by 325 so that they fall within a realistic range.
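The split files and the depth scaling described above can be sketched in Python as follows. This is a minimal illustration, not the authors' loading code: the function names `read_split` and `scale_depth` and the constant `DEPTH_SCALE` are hypothetical, and the sketch assumes the depth image has already been decoded into a NumPy uint16 array by an image library that preserves 16-bit values.

```python
from pathlib import Path

import numpy as np

# Divisor stated in the dataset description; the constant name is an assumption.
DEPTH_SCALE = 325.0


def read_split(path):
    """Return the sample indices listed in a split file, one idx per line."""
    lines = Path(path).read_text().splitlines()
    # Skip blank lines so a trailing newline does not produce an empty idx.
    return [line.strip() for line in lines if line.strip()]


def scale_depth(raw_depth):
    """Convert a raw uint16 single-channel depth map to scaled float32 depth."""
    raw_depth = np.asarray(raw_depth)
    if raw_depth.dtype != np.uint16:
        raise TypeError(f"expected uint16 depth, got {raw_depth.dtype}")
    if raw_depth.ndim != 2:
        raise ValueError("expected a single-channel (H, W) depth map")
    # Cast to float before dividing to avoid integer truncation.
    return raw_depth.astype(np.float32) / DEPTH_SCALE
```

In practice the raw array would come from a 16-bit-aware reader, for example OpenCV's `cv2.imread(path, cv2.IMREAD_UNCHANGED)`. A plain 8-bit load would silently truncate the depth values, which is why `scale_depth` rejects any dtype other than uint16.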

Citation

If you find this useful, please cite our work as follows:

@InProceedings{jin2020geometric,
  title={Geometric Structure Based and Regularized Depth Estimation From 360$\degree$ Indoor Imagery},
  author={Lei Jin and Yanyu Xu and Jia Zheng and Junfei Zhang and Rui Tang and Shugong Xu and Jingyi Yu and Shenghua Gao},
  booktitle={The IEEE Conference on Computer Vision and Pattern Recognition},
  year={2020}
}

Reference

@inproceedings{Structured3D,
  title = {Structured3D: A Large Photo-realistic Dataset for Structured 3D Modeling},
  author = {Jia Zheng and Junfei Zhang and Jing Li and Rui Tang and Shenghua Gao and Zihan Zhou},
  booktitle = {Proceedings of The European Conference on Computer Vision (ECCV)},
  year = {2020}
}